AI platform · 2025 · Live

Axon

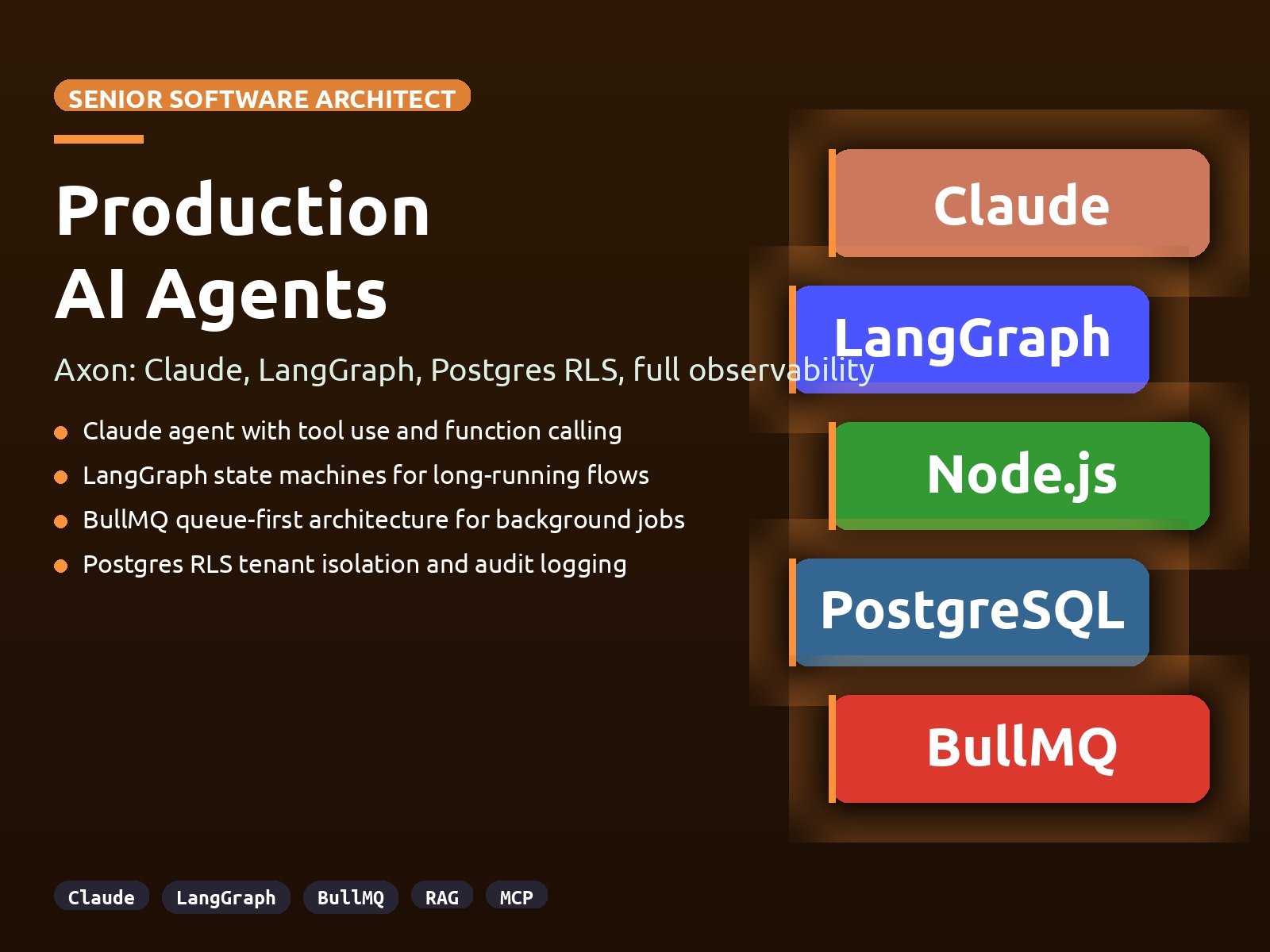

Multi-tenant agentic AI SaaS platform built with LangGraph, RAG, MCP, and production observability.

Tech stack

Next.js 15 · Fastify 5 · Python · LangGraph · FastAPI · PostgreSQL 16 · pgvector · Redis · BullMQ · Drizzle ORM · Better Auth · Langfuse · Prometheus · Grafana · Loki

The problem

AI agents in production need audit trails, cost control, and tenant isolation, all of which tutorials skip. Most open-source agent frameworks assume a single user and a single wallet. A real multi-tenant AI platform needs per-tenant spend caps, per-tenant data isolation, and full observability into token usage, latency, and errors.

Goals

- Queue-first architecture so long-running agent work never blocks a request

- Runtime row-level security so tenant isolation survives a leaky query

- Full observability stack covering traces, metrics, logs, and LLM-specific telemetry

- MCP tool integration so agents can reach into databases and internal APIs cleanly

- Billing that tracks real token usage, not estimated seat counts
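Billing on real token usage, combined with the per-tenant spend caps mentioned above, could be sketched in Python as a small ledger. The model names, the per-million-token prices, and the `SpendLedger` / `SpendCapExceeded` names are hypothetical placeholders, not Axon's billing code or any provider's actual pricing. The idea is only that cost is computed from the prompt and completion token counts the provider reports, and new work is refused once a tenant reaches its cap.

```python
from dataclasses import dataclass, field
from decimal import Decimal

# Hypothetical USD prices per one million tokens: (input, output).
# Placeholders only; real prices depend on provider and model.
PRICES_PER_MTOK: dict[str, tuple[Decimal, Decimal]] = {
    "model-small": (Decimal("0.15"), Decimal("0.60")),
    "model-large": (Decimal("3.00"), Decimal("15.00")),
}


class SpendCapExceeded(Exception):
    """Raised when a tenant's recorded spend has reached its cap."""


@dataclass
class SpendLedger:
    """In-memory per-tenant ledger; a real system would persist this."""
    caps: dict[str, Decimal]
    spent: dict[str, Decimal] = field(default_factory=dict)

    def cost(self, model: str, prompt_tokens: int, completion_tokens: int) -> Decimal:
        # Decimal avoids float rounding drift when summing many small charges.
        in_price, out_price = PRICES_PER_MTOK[model]
        return (in_price * prompt_tokens + out_price * completion_tokens) / Decimal(1_000_000)

    def check(self, org_id: str) -> None:
        # Call before admitting new agent work; raises KeyError for unknown tenants.
        if self.spent.get(org_id, Decimal(0)) >= self.caps[org_id]:
            raise SpendCapExceeded(org_id)

    def record(self, org_id: str, model: str, prompt_tokens: int, completion_tokens: int) -> Decimal:
        # Call after each LLM response with the token counts the provider reported.
        amount = self.cost(model, prompt_tokens, completion_tokens)
        self.spent[org_id] = self.spent.get(org_id, Decimal(0)) + amount
        return amount
```

With these placeholder prices, a `model-large` call with 2,000 prompt tokens and 500 completion tokens records $0.0135, which is enough to push a tenant with a $0.01 cap over its limit. Whether the cap is checked only at enqueue time or also between agent steps is a design choice the source does not specify.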

The solution

- LangGraph agents with streaming chat end-to-end

- RAG pipeline with upload, chunk, embed, and hybrid search over pgvector

- MCP servers (a Postgres server and a custom server template) with an agent bridge

- Runtime RLS via the axon_app role and a withOrg pattern on every query

- BullMQ workers with a Bull Board admin UI for queue visibility

- Prometheus, Grafana, Loki, and Langfuse wired end-to-end for observability

- Stripe billing plus CI/CD and production deploy scaffolding
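The `withOrg` pattern is named but not shown. A minimal Python sketch of the same idea follows, assuming a DB-API-style connection (for example psycopg) and an RLS policy that reads a setting called `app.current_org`. The function name `with_org`, the setting name, and the `documents` table in the comment are illustrative assumptions; Axon's own version likely lives in the TypeScript/Drizzle layer and may differ. It pins the tenant id for a single transaction with Postgres's `set_config(name, value, is_local)`, so every query issued as the non-owner `axon_app` role is filtered by the policy.

```python
from contextlib import contextmanager
from typing import Any, Iterator

# Assumed policy on each tenant-scoped table (hypothetical table name):
#   ALTER TABLE documents ENABLE ROW LEVEL SECURITY;
#   CREATE POLICY tenant_isolation ON documents
#     USING (org_id = current_setting('app.current_org')::uuid);
# The app connects as axon_app, which does not own the table, so the policy applies.


@contextmanager
def with_org(conn: Any, org_id: str) -> Iterator[Any]:
    """Yield a cursor whose queries run in one transaction scoped to org_id."""
    cur = conn.cursor()
    try:
        # is_local = true: the setting ends with this transaction, so a pooled
        # connection cannot carry one tenant's id into another tenant's request.
        cur.execute("SELECT set_config('app.current_org', %s, true)", (org_id,))
        yield cur
        conn.commit()
    except Exception:
        # Any error inside the block aborts the whole tenant-scoped transaction.
        conn.rollback()
        raise
    finally:
        cur.close()
```

Each tenant-scoped query then runs inside `with with_org(conn, org_id) as cur:`. Because the filter lives in the database rather than in application code, a query that forgets its own `org_id` condition still sees only that tenant's rows.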

My role

- Architecture across Next.js 15, Fastify, and Python FastAPI services

- Drizzle schema design with runtime RLS enforcement

- LangGraph agent graphs and streaming chat pipeline

- RAG ingestion pipeline and hybrid search

- Observability stack integration and dashboards

- Deployment on Oracle Cloud with Caddy and Cloudflare Tunnel

UI direction

An admin-first aesthetic that prioritizes queue and telemetry visibility over visual chrome. The streaming chat UI is built for long-running agent loops rather than casual chat.

User flows

Agentic chat flow

1. User sends a message in a streaming chat session

2. Request enters the queue with tenant scoping applied

3. Worker runs the LangGraph agent with tools bound via MCP

4. Retrieval hits pgvector and full-text search in parallel

5. Response streams back with token usage logged to Langfuse
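The retrieval step queries vector and full-text search concurrently, but the source does not say how the two result lists are merged. One common choice is reciprocal rank fusion (RRF), sketched here in Python with `asyncio.gather`. The retriever stubs, the chunk ids they return, and the constant `k = 60` are assumptions for illustration; in a real pipeline the two coroutines would run a pgvector similarity query and a full-text query, both tenant-scoped.

```python
import asyncio
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked id lists: each list adds 1 / (k + rank) to a document's score."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id so the order is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))


async def vector_search(query: str) -> list[str]:
    # Hypothetical stub standing in for a pgvector nearest-neighbour query.
    await asyncio.sleep(0)
    return ["c3", "c1", "c7"]


async def fulltext_search(query: str) -> list[str]:
    # Hypothetical stub standing in for a full-text query.
    await asyncio.sleep(0)
    return ["c1", "c9", "c3"]


async def hybrid_search(query: str, limit: int = 5) -> list[str]:
    # Run both retrievers concurrently, then fuse their rankings.
    vec, fts = await asyncio.gather(vector_search(query), fulltext_search(query))
    return reciprocal_rank_fusion([vec, fts])[:limit]


if __name__ == "__main__":
    print(asyncio.run(hybrid_search("refund policy")))  # ['c1', 'c3', 'c9', 'c7']
```

RRF only uses rank positions, which sidesteps the fact that cosine distances and full-text relevance scores are on incomparable scales. Chunks found by both retrievers (`c1`, `c3`) rise to the top.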

Document ingestion

1. User uploads a document through the app

2. File is chunked and embedded in a worker

3. Chunks land in pgvector with tenant scoping

4. Hybrid retrieval becomes available to the agent
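The ingestion flow does not specify a chunking strategy. A simple, widely used option is fixed-size windows with overlap, so a sentence cut at one boundary still appears intact in the neighbouring chunk. The Python sketch below assumes whitespace-split words and illustrative sizes (`size=200`, `overlap=40`); a production pipeline would more likely count model tokens. The `Chunk` record carries `org_id` so the later pgvector insert can be tenant-scoped. The names here are hypothetical, and embedding is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    org_id: str  # tenant that owns the source document
    doc_id: str
    index: int   # position of the chunk within the document
    text: str


def chunk_document(org_id: str, doc_id: str, text: str,
                   size: int = 200, overlap: int = 40) -> list[Chunk]:
    """Split text into windows of `size` words; consecutive windows share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks: list[Chunk] = []
    for i, start in enumerate(range(0, len(words), step)):
        window = words[start:start + size]
        chunks.append(Chunk(org_id, doc_id, i, " ".join(window)))
        if start + size >= len(words):
            break  # this window already reaches the end of the document
    return chunks
```

For a ten-word document with `size=4` and `overlap=1`, this yields three chunks, each starting with the last word of the previous one. The early `break` avoids emitting a trailing chunk that would only repeat words already covered.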


Key learnings

- Runtime RLS via a dedicated Postgres role is the most defensible tenant isolation pattern for AI workloads

- Queue-first design is not optional once you run agent loops with tool calls

- Langfuse pays for itself in one debugging session when an agent burns tokens on the wrong path

- MCP is the right abstraction for giving agents access to databases and internal APIs without leaking credentials

Want something like Axon?

I'm open to senior contract work. Let's talk about what you're building.
